Do you want to know about neurons in an Artificial Neural Network? If yes, then give a few minutes to this article. In this article, I will explain all the details related to neurons in an Artificial Neural Network.

Hello, and welcome!

In this blog, I am going to tell you about:

- Neurons in the Human Brain.

- Neurons in an Artificial Neural Network.

The neuron is the basic building block of artificial neural networks. So let’s first understand neurons in the human brain.

Neurons in the Human Brain.

Neurons in the human brain look something like the image below. As you can see, there are some tails, some circles, and branches coming out of them. But the question is: how can we recreate this in a machine? We need to, because the whole idea behind deep learning is to mimic the human brain, which is the most powerful learning tool on the planet. So in deep learning, we try to make a machine’s “brain” as powerful as a human brain.

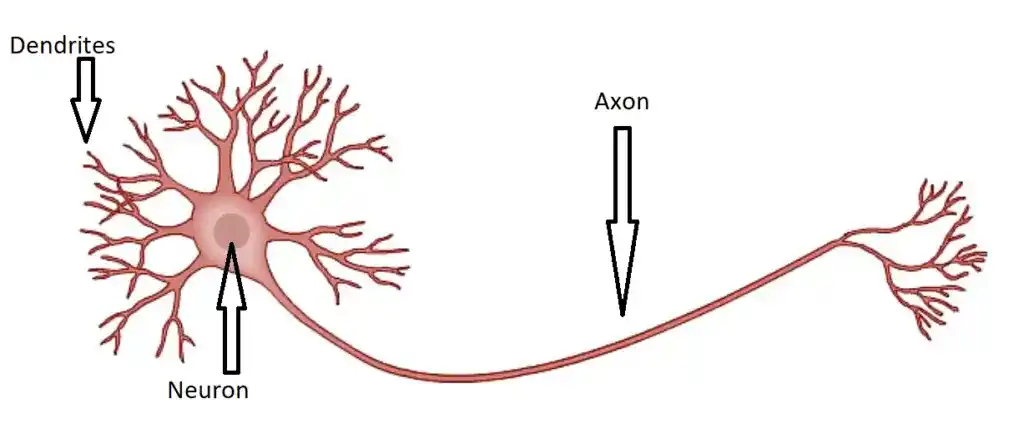

This is a neuron. The main body is the neuron itself, the branches are the dendrites, and the long tail is the axon. So what do the neuron, its dendrites, and its axon actually do? The key point to understand here is that a single neuron on its own is not very useful. It is like an ant: a single ant can’t do much, and similarly, a single neuron can’t do much. But when you have a bunch of neurons working together, they can do magic.

So the next question is: how do they work together?

That is what dendrites and axons are for. Dendrites receive signals for a neuron, and axons transmit them. The dendrites of one neuron are connected to the axons of other neurons, so the signal of the first neuron travels down its axon and is passed on to the dendrites of the second neuron. That’s how neurons are connected and work together.

So this is all about human neurons. Now let’s move on to artificial neurons.

Neurons in an Artificial Neural Network.

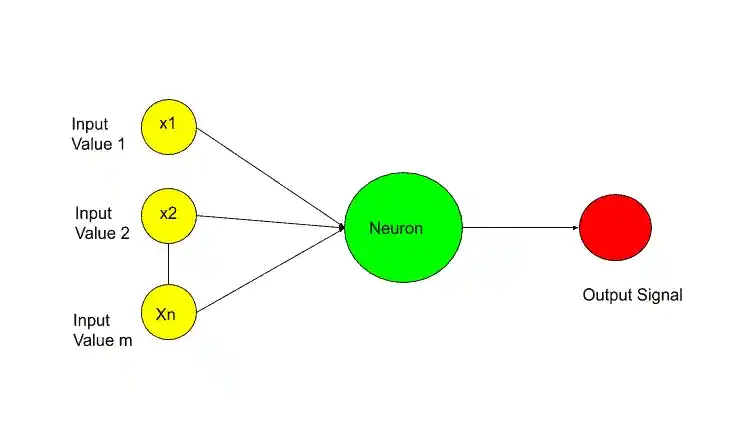

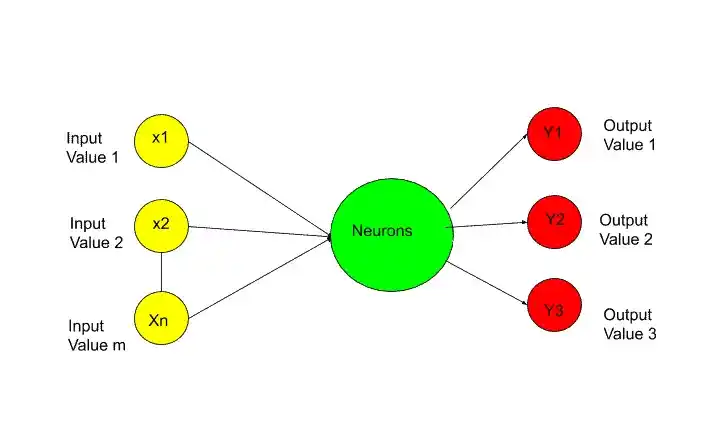

So here, the green circle in the image is the neuron, which is also known as a node. This neuron receives some input signals and produces an output signal, just like the dendrites and axon of a neuron in the human brain. In the image, I represent these input signals as other neurons.

Here, I stick with some color coding so that you can follow easily 🙂: the yellow neurons make up the input layer, so the green neuron is receiving signals from the yellow neurons. These signals are just input values, in the same way as in linear regression, where you have input values and an output value. The same goes for neurons: the yellow ones are the input values and the red one is the output value.
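To see the linear regression analogy in numbers, here is a minimal Python sketch. It assumes a single node with no activation function and made-up weights; the `bias` term is my own addition that plays the role of the regression intercept. Under those assumptions, the node’s output is exactly a linear regression prediction.

```python
# Hypothetical example: three input values (yellow neurons) feeding one node.
# With no activation function, the node computes the same thing as a
# linear regression model: intercept + sum of coefficient * input.

def linear_regression_predict(x, coefficients, intercept):
    """Classic linear regression: y = b0 + b1*x1 + ... + bn*xn."""
    return intercept + sum(b * xi for b, xi in zip(coefficients, x))


def node_output(inputs, weights, bias=0.0):
    """A single node with no activation: weighted sum of inputs plus bias."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    return weighted_sum + bias


inputs = [1.0, 2.0, 3.0]      # input values (the yellow neurons)
weights = [0.5, -1.0, 2.0]    # made-up weights
bias = 0.25                   # plays the role of the intercept

print(node_output(inputs, weights, bias))                # 4.75
print(linear_regression_predict(inputs, weights, bias))  # 4.75
```

Once an activation function is added on top of the weighted sum, the node is no longer a plain linear regression, which is what lets networks model non-linear patterns.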

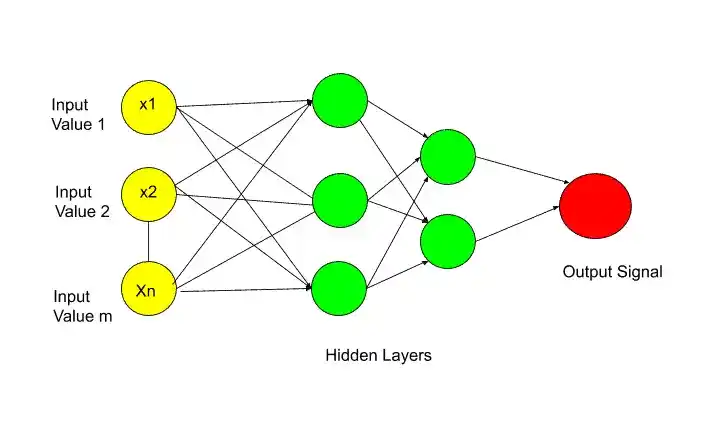

In the previous image, the green neurons are getting signals from the input layer(yellow) but in many cases, they take signals from other hidden layer neurons, the green ones something like that-

In this image, there are two hidden layers, so the second green hidden layer takes its signals from the first green hidden layer. But the concept is the same as I explained previously.
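Here is a minimal sketch of how signals flow through two hidden layers, assuming made-up weights and the ReLU activation for the hidden layers (one common choice; the article does not prescribe it). Each row of a weight matrix holds the weights of one neuron, and the names `layer_forward`, `W_hidden1`, `W_hidden2`, and `W_output` are illustrative only.

```python
def relu(z):
    """Example activation: keeps positive values, replaces negatives with 0."""
    return max(0.0, z)


def identity(z):
    """No activation, used here for the output layer."""
    return z


def layer_forward(inputs, weight_matrix, activation=relu):
    """Compute every neuron in a layer; each row holds one neuron's weights."""
    return [activation(sum(w * x for w, x in zip(row, inputs)))
            for row in weight_matrix]


x = [1.0, 2.0]                                       # input layer (yellow)
W_hidden1 = [[0.5, 0.5], [1.0, -1.0], [-0.5, 1.0]]   # 3 neurons, 2 inputs each
W_hidden2 = [[1.0, 1.0, 1.0], [0.5, -1.0, 2.0]]      # 2 neurons, 3 inputs each
W_output = [[1.0, -1.0]]                             # 1 output neuron (red)

h1 = layer_forward(x, W_hidden1)                     # first hidden layer sees x
h2 = layer_forward(h1, W_hidden2)                    # second hidden layer sees h1
y = layer_forward(h2, W_output, activation=identity)

print(h1)  # [1.5, 0.0, 1.5]
print(h2)  # [3.0, 3.75]
print(y)   # [-0.75]
```

Notice that `h2` is computed from `h1`, not from `x`: the second hidden layer never sees the raw inputs directly.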

Now let’s describe each layer in detail. The first layer is the input layer.

Input Layer-

Now you probably have a question in your mind: what signals pass through the input layer?

In terms of the human brain, these input signals come from your senses: whatever you can see, hear, smell, or touch. For example, if you touch a hot surface, a signal is immediately sent to your brain. That signal is the input signal in terms of the human brain.

But,

In terms of an artificial neural network, the input layer contains the independent variables: independent variable 1, independent variable 2, and so on up to independent variable n. The important thing to remember is that these independent variables belong to one observation. In simpler words, suppose the independent variables are a person’s age, salary, and job role. Then the input layer takes all of these values for one person, that is, one row of your dataset.
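As a rough illustration of “one observation = one row”, the sketch below builds the input-layer values for a single person from a small made-up dataset. Because a network only accepts numbers, it assumes the categorical job role is one-hot encoded (one 0/1 input per role); the role list `JOB_ROLES` and the function `row_to_input_vector` are hypothetical.

```python
# Hypothetical dataset: each dict is one observation (one person, one row).
dataset = [
    {"age": 25, "salary": 40000, "job_role": "engineer"},
    {"age": 38, "salary": 72000, "job_role": "manager"},
    {"age": 45, "salary": 55000, "job_role": "analyst"},
]

JOB_ROLES = ["analyst", "engineer", "manager"]  # assumed set of categories


def row_to_input_vector(row):
    """Turn one observation into the numbers fed to the input layer.

    The categorical job role is one-hot encoded, so it becomes one
    0/1 input per possible role.
    """
    one_hot = [1.0 if row["job_role"] == role else 0.0 for role in JOB_ROLES]
    return [float(row["age"]), float(row["salary"])] + one_hot


x = row_to_input_vector(dataset[1])  # the input layer sees ONE person at a time
print(x)       # [38.0, 72000.0, 0.0, 0.0, 1.0]
print(len(x))  # 5 input neurons for this encoding
```

The network then processes the rows one by one (or in small batches), but each input vector always describes a single observation.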

Another important thing to know is that you usually need to apply standardization or normalization to these independent variables; which one depends on the scenario. The main purpose of standardization or normalization is to bring all values into the same range, so that a variable with large values (like salary) doesn’t dominate a variable with small values (like age).
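Two common ways to bring values into the same range are z-score standardization (subtract the mean, divide by the standard deviation) and min-max normalization (rescale into the range 0 to 1). Here is a minimal sketch using Python’s standard `statistics` module with made-up ages and salaries; the function names are my own.

```python
import statistics


def standardize(values):
    """Z-score standardization: (x - mean) / standard deviation.

    Assumes the values are not all identical (otherwise std would be 0).
    """
    mean = statistics.mean(values)
    std = statistics.pstdev(values)  # population standard deviation
    return [(v - mean) / std for v in values]


def min_max_normalize(values):
    """Min-max normalization: rescales values into the range [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


ages = [25, 38, 45]
salaries = [40000, 72000, 55000]

print(min_max_normalize(ages))      # ages now lie between 0 and 1
print(min_max_normalize(salaries))  # salaries now lie between 0 and 1 too
print(standardize(salaries))        # mean 0, standard deviation 1
```

In practice, the mean, standard deviation, minimum, and maximum are computed on the training data and then reused to transform any new data.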

Now let’s move on to the next layer.

Output Layer-

So, the next question is: what can the output value be?

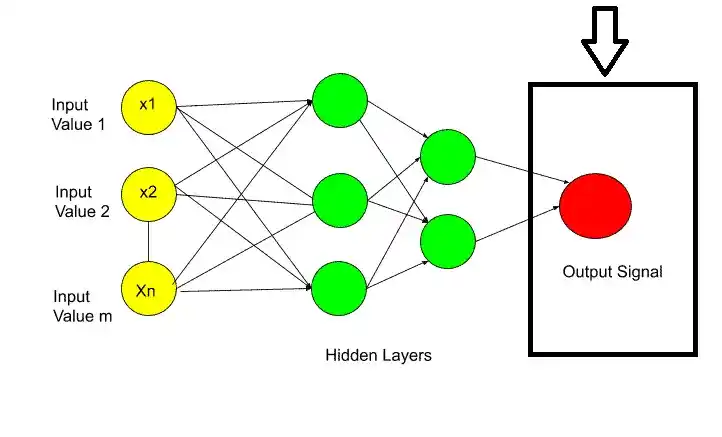

The output value can be:

- Continuous (like a price).

- Binary (in yes/no form).

- Categorical variable.

If the output value is categorical, the important thing is that you don’t have just one output value. You may have more than one output value, typically one per category, as I have shown in the picture.
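The article does not say which activation the output layer uses, so the sketch below assumes three common choices: no activation for a continuous output, a sigmoid for a binary output, and a softmax over several output neurons for a categorical output. The function names are illustrative.

```python
import math


def continuous_output(z):
    """Continuous output (e.g. a price): pass the weighted sum through unchanged."""
    return z


def binary_output(z):
    """Binary output: the sigmoid squashes z into (0, 1), read as P(yes)."""
    return 1.0 / (1.0 + math.exp(-z))


def categorical_output(zs):
    """Categorical output: one neuron per category; softmax turns their
    values into probabilities that sum to 1."""
    m = max(zs)  # subtracting the max avoids overflow in exp
    exps = [math.exp(z - m) for z in zs]
    total = sum(exps)
    return [e / total for e in exps]


print(continuous_output(199.5))  # 199.5
print(binary_output(0.0))        # 0.5
probs = categorical_output([2.0, 1.0, 0.1])  # three output neurons
print(probs.index(max(probs)))   # 0, so the first category is predicted
```

This is why a categorical output needs several output neurons: each one scores one category, and the highest probability wins.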

Next, I will discuss synapses.

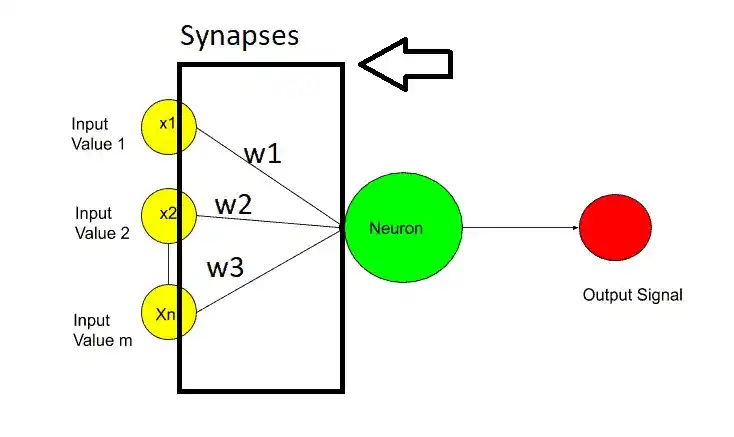

Synapses-

Synapses are nothing but the connecting lines between two layers.

A weight is assigned to each synapse. These weights are crucial to how artificial neural networks work, because weights are how neural networks learn. By adjusting the weights, the neural network decides which signals are important and which are not. In other words, with the help of weights, the neural network decides which signals should pass to the next layer and which shouldn’t.
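To make “a weight on each synapse” concrete, here is a hypothetical layer with 3 input neurons fully connected to 2 neurons, so it has 3 × 2 = 6 synapses and therefore 6 weights. The weights are made up; the zero weight shows how a synapse can stop a signal from reaching a neuron, whatever the input value is, and the negative weight shows a signal that pushes the neuron’s value down.

```python
# Hypothetical layer: 3 input neurons connected to 2 neurons in the next layer.
# Every (input, neuron) pair is one synapse with its own weight.
weights = [
    [0.8, 0.0, -0.3],   # synapses into neuron A
    [0.1, 1.2, 0.0],    # synapses into neuron B
]
inputs = [1.0, 5.0, 2.0]


def contributions(inputs, neuron_weights):
    """How much each incoming signal contributes to one neuron: weight * input."""
    return [w * x for w, x in zip(neuron_weights, inputs)]


num_synapses = sum(len(row) for row in weights)
print(num_synapses)                       # 6
print(contributions(inputs, weights[0]))  # [0.8, 0.0, -0.6]
```

Input 2 has the largest value (5.0), yet it contributes nothing to neuron A because that synapse’s weight is 0. Training is what decides these values.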

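When you train the model, you are basically adjusting the weights of all the synapses across the network. This is where gradient descent and backpropagation come into play: backpropagation computes how much each weight contributed to the error, and gradient descent then nudges each weight in the direction that reduces that error.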
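Here is a minimal sketch of training by gradient descent, assuming a single neuron with no activation, a squared-error loss, a learning rate of 0.1, and a tiny made-up dataset whose targets follow y = 2*x1 - x2. For one neuron, backpropagation reduces to a single chain-rule step; in a deeper network the same rule is applied layer by layer, from the output back to the inputs.

```python
# Toy data (made up): targets follow y = 2*x1 - x2, so the ideal weights are [2, -1].
data = [([1.0, 0.0], 2.0), ([0.0, 1.0], -1.0), ([1.0, 1.0], 1.0), ([2.0, 1.0], 3.0)]

weights = [0.0, 0.0]   # start from arbitrary weights
learning_rate = 0.1

for epoch in range(500):
    grads = [0.0, 0.0]
    for x, target in data:
        prediction = sum(w * xi for w, xi in zip(weights, x))  # forward pass
        error = prediction - target
        # Backward pass: d(0.5 * error**2) / d(w_i) = error * x_i (chain rule),
        # averaged over the dataset.
        for i, xi in enumerate(x):
            grads[i] += error * xi / len(data)
    # Gradient descent step: move each weight against its gradient.
    weights = [w - learning_rate * g for w, g in zip(weights, grads)]

print([round(w, 3) for w in weights])  # close to [2.0, -1.0]
```

After training, the learned weights end up close to the values that generated the data, which is exactly what “adjusting the weights” means.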

Last but not least, let’s look at the hidden layer.

Hidden Layer or Neuron-

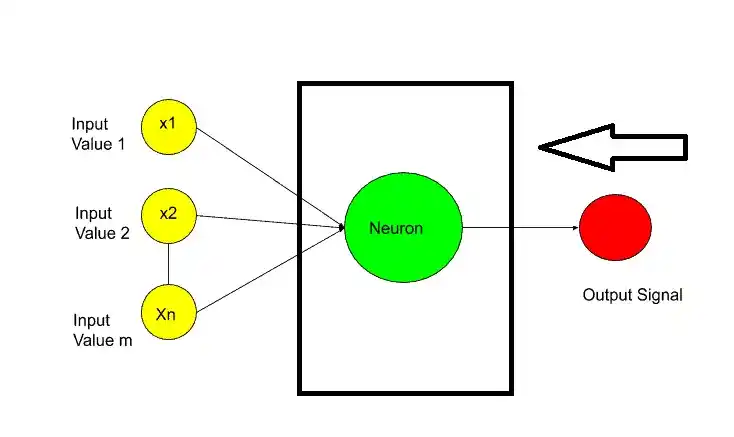

So the next question is: what happens inside a neuron?

Two main steps happen inside a neuron:

- Weighted Sum.

- Activation Function.

The first step is the weighted sum: each input value is multiplied by the weight assigned to its synapse, and all of these products are added together, like this:

$$x_1 w_1 + x_2 w_2 + x_3 w_3 + \dots + x_n w_n = \sum_{i=1}^{n} x_i w_i$$
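As a quick worked example, take a hypothetical neuron with $n = 3$ inputs $x = (1, 2, 3)$ and made-up weights $w = (0.5, -1, 0.25)$. Its weighted sum is:

$$\sum_{i=1}^{3} x_i w_i = (1)(0.5) + (2)(-1) + (3)(0.25) = 0.5 - 2 + 0.75 = -0.75$$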

After the weighted sum is calculated, the activation function is applied to it. Based on the result, the neuron decides whether to pass the signal on to the next layer or not.
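The article does not name a specific activation function, so here are three common ones as a sketch. The step function is the closest match to the “send the signal or not” idea; sigmoid and ReLU are graded versions that are more common in practice, because gradient descent needs a slope to follow and the step function’s slope is zero almost everywhere. The inputs and weights are made up (their weighted sum is -0.75), and the name `neuron` is illustrative.

```python
import math


def step(z, threshold=0.0):
    """Fires (1) if the weighted sum exceeds the threshold, otherwise stays silent (0)."""
    return 1.0 if z > threshold else 0.0


def sigmoid(z):
    """Squashes any weighted sum into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def relu(z):
    """Passes positive weighted sums through unchanged and blocks negative ones."""
    return max(0.0, z)


def neuron(inputs, weights, activation):
    z = sum(x * w for x, w in zip(inputs, weights))  # step 1: weighted sum
    return activation(z)                             # step 2: activation function


inputs = [1.0, 2.0, 3.0]
weights = [0.5, -1.0, 0.25]   # weighted sum = -0.75
for f in (step, sigmoid, relu):
    print(f.__name__, neuron(inputs, weights, f))
```

With a negative weighted sum like this one, the step and ReLU neurons block the signal entirely, while the sigmoid neuron still passes a weak value (below 0.5).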

So, that’s all about neurons in an Artificial Neural Network. I hope you now have a clear idea of what neurons are and how they work. If you have any questions, feel free to ask me in the comment section.

Enjoy Learning!

All the Best!


Thank YOU!

Thought of the Day…

‘It’s what you learn after you know it all that counts.’

– John Wooden

Written By Aqsa Zafar

Aqsa Zafar is a Ph.D. scholar in Machine Learning at Dayananda Sagar University, specializing in Natural Language Processing and Deep Learning. She has published research in AI applications for mental health and actively shares insights on data science, machine learning, and generative AI through MLTUT. With a strong background in computer science (B.Tech and M.Tech), Aqsa combines academic expertise with practical experience to help learners and professionals understand and apply AI in real-world scenarios.